
AI cybersecurity capabilities require urgent international cooperation, AI godfather Bengio says

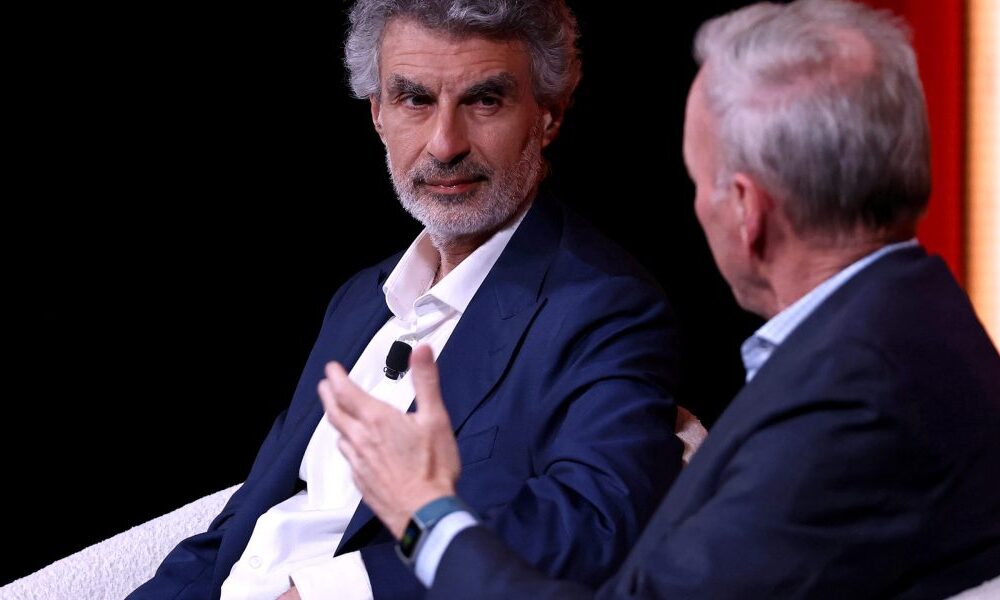

Yoshua Bengio, a computer scientist considered one of the “godfathers of AI” for his help in pioneering the deep learning systems that underpin today’s AI models, has been warning for years about the risks of the technology he helped to create. Now, he says new models like Anthropic’s Mythos demonstrate why international institutions urgently need to work together to address AI’s potential dangers.

Anthropic’s newest model, Claude Mythos, is said to represent a major step forward in cybersecurity, identifying thousands of previously unknown “zero-day” vulnerabilities. Zero-days are software bugs that are unknown to the programmers who created the software and that could enable hackers to bypass security controls and potentially steal vital data.

However, the company has said that because these capabilities are dual-use—and could enable sophisticated cyberattacks capable of disrupting critical global infrastructure—it is only releasing the system to a small group of firms to give them a head start in securing vital systems.

The companies Anthropic initially chose to share Mythos with were all U.S.-based technology firms whose software underpins many of the world’s critical systems. The company has also briefed the U.S. government on the technology and is starting to provide some U.S. government departments and agencies with access to the model.

While some have praised the company’s caution in opting for a highly circumscribed release of Mythos, the decision has raised uncomfortable questions about the concentration of power in the hands of a single U.S. company. Anthropic alone decided with whom it would share Mythos. That has left many businesses and governments excluded from the initial cohort begging for access so they too can safeguard their systems. The situation has hammered home to many why responsibility for AI governance needs to be shared much more broadly and internationally.

“It doesn’t make sense that private individuals are deciding the fate of infrastructure for everyone else,” Bengio said in an interview with Fortune. “What about all the companies and all the countries that didn’t get access?”

Bengio, who has won the Turing Award, considered computer science’s equivalent of the Nobel Prize, is hardly the only one urgently asking that question. The Bank of England, for example, pressed Anthropic for access to Mythos for U.K. banks, publicly announcing that the company had assured it these institutions would begin to get access to the model this coming week. Discussions at the IMF and World Bank spring meetings, currently taking place in Washington, were unexpectedly dominated by concerns over Mythos’ capabilities. Policymakers warned that systems like Mythos could expose weaknesses across the global banking system, while regulators and executives—particularly in Europe—said they had yet to gain access to the model or fully understand the scale of the vulnerabilities it has uncovered.

For many outside the U.S., Mythos is likely to accelerate an already burgeoning desire for “AI sovereignty,” a term that generally refers to a country having AI capabilities and infrastructure that do not depend on foreign companies and governments. Many countries are particularly wary of being overly dependent on American tech at a time when the U.S. government has become a less reliable ally and has shown a willingness to weaponize supply chain bottlenecks to achieve other policy objectives. There is also concern about being beholden to just a handful of American tech CEOs.

Meanwhile in Washington, the U.S. government is moving to secure its own access to the powerful model. In a memo reviewed by Bloomberg, the White House Office of Management and Budget told Cabinet departments this week that it is setting up protections to allow federal agencies—including Defense, Treasury, Commerce, Homeland Security, Justice, and State—to begin using a version of Mythos, with more details expected “in the coming weeks.”

The push comes despite an ongoing legal fight between Anthropic and the Pentagon, which earlier this year declared the company a supply chain threat over a dispute about AI safeguards. (Anthropic has been challenging that designation in court.) According to a report from Axios, Anthropic CEO Dario Amodei is scheduled to meet White House chief of staff Susie Wiles on Friday in an effort to resolve the dispute.

Bengio is urging far greater international coordination in response to the fresh cybersecurity risks, including the creation of a regulatory body similar to the Food and Drug Administration to oversee the development and deployment of advanced AI systems. He argued that governments—particularly the U.S.—should place clearer obligations on companies developing these models to ensure their technologies do not inadvertently harm critical infrastructure in other countries, and that oversight of such high-stakes decisions cannot be left to private actors alone.

“There needs to be an agency really in charge of overseeing these kinds of decisions,” he said. “As the power of AI continues to grow, this question of international commitment becomes pressing. There’s no reason that it’s going to limit itself to attacking U.S. infrastructure or U.S. citizens. So this has to be an international affair.”

The open-source question

Bengio also said an agreement with China needed to be part of any meaningful global response. The U.S. and China are locked in an aggressive race for AI supremacy.

While Bengio estimated that leading Chinese AI models are likely lagging their U.S. counterparts in raw capabilities by roughly six months, he stressed that the gap does little to reduce the underlying risk.

China is also making rapid progress in open-source models—systems where the underlying model parameters and code are made publicly available—which Bengio warned could ultimately pose an even greater danger than powerful systems like Mythos.

Unlike proprietary models, these open-source systems can be downloaded, modified, and run by anyone. Bengio said that means the safety guardrails companies build in—such as filters designed to block malicious requests—can simply be stripped away by users, leaving little to prevent misuse.

As models become more capable at identifying and exploiting software vulnerabilities, he warned that releasing them openly could hand powerful cyber capabilities directly to bad actors.

The concern isn’t limited to open-source AI. Bengio warned that the broader tradition of open-source software—long considered a pillar of internet security—is also being reshaped by these capabilities.

For decades, open-source software, whose code is publicly available, has been seen as more secure because it allows more developers to inspect and fix vulnerabilities. But highly capable AI systems can now scan that same public code at scale to identify weaknesses far faster than humans, potentially turning widely used open infrastructure into a prime target. Bengio, a long-time advocate of open source, said open systems still offer important transparency and democratic benefits but that, in an era of AI-assisted cyber offense, they can also become a serious liability.